Manually Controlling Kafka Offsets in Java

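I previously used Kafka's KafkaStream, where each consumer opens a stream to its partition and reads data from Kafka through it. Once a consumer receives data, Kafka automatically maintains that consumer's offset. Suppose the consumer has fetched a message and is about to write it to the database (but has not written it yet) when it crashes. If Kafka maintains the offset automatically, it treats the message as already consumed, and the data is lost.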
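The failure mode described above can be sketched with a small in-memory simulation. This is a deliberately simplified model and not the Kafka API: the class CrashDemo, the method run, and the flag commitBeforeStore are hypothetical names, and real auto-commit is driven by a timer rather than by each delivery. The sketch compares advancing the offset as soon as a record is delivered with advancing it only after the write has succeeded.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** In-memory stand-in for one partition plus a stored group offset; not the Kafka API. */
final class CrashDemo {

    /**
     * Consumes the log from offset 0, crashes once just before the record at
     * crashAt is stored, then restarts from the committed offset.
     * Returns every value that reached the simulated database.
     */
    static List<String> run(List<String> log, int crashAt, boolean commitBeforeStore) {
        List<String> database = new ArrayList<>();
        int committed = 0;        // next offset the group resumes from
        boolean crashed = false;

        for (int attempt = 0; attempt < 2; attempt++) {   // first run, then one restart
            for (int offset = committed; offset < log.size(); offset++) {
                if (commitBeforeStore) {
                    committed = offset + 1;   // offset advances as soon as the record is delivered
                }
                if (!crashed && offset == crashAt) {
                    crashed = true;           // consumer dies before the database write
                    break;
                }
                database.add(log.get(offset));
                if (!commitBeforeStore) {
                    committed = offset + 1;   // offset advances only after the write succeeded
                }
            }
        }
        return database;
    }

    public static void main(String[] args) {
        List<String> log = Arrays.asList("a", "b", "c", "d");
        System.out.println(run(log, 2, true));   // prints [a, b, d]: "c" is lost
        System.out.println(run(log, 2, false));  // prints [a, b, c, d]: "c" is re-read after the restart
    }
}
```

In the first call the record at offset 2 never reaches the database because the stored offset had already moved past it when the consumer died.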

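Kafka also lets you commit offsets yourself. In the scenario above we commit manually: if the consumer crashes, the program never reaches the commit, so another consumer in the same group can consume the record again and no data is lost. First, make the following setting: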

    // Disable auto-commit; offsets are updated manually
    properties.put("enable.auto.commit", "false");

Commit with the following API:

    consumer.commitSync();

Note:

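I just ran a test. Suppose I fetch five records from Kafka: 1, 2, 3, 4 and 5. If the consumer's logic fails for 1, 2, 3 and 4 and nothing is committed, but the logic for 5 succeeds and a commit is made, the offset still moves to record 5, so 1, 2, 3 and 4 are effectively lost. This is because commitSync() without arguments commits the offsets returned by the last poll(), not the offsets of individual records.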

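If the consumer fetches records and every operation on them fails, so that nothing is committed, then after the consumer restarts those records are read again, starting from the point of failure.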
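Both observations can be reproduced with a toy model of a consumer's position and committed offset. This is a sketch under stated assumptions, not the Kafka client: BatchCommitDemo and its methods are hypothetical names, the log holds five records at offsets 0 to 4 (standing in for records 1 to 5), and commit() mimics an argument-less commit by storing the current position.

```java
import java.util.ArrayList;
import java.util.List;

/** Toy model of one partition's position and committed offset; not the Kafka API. */
final class BatchCommitDemo {
    private final int logSize;
    private int position;    // next offset poll() will hand out
    private int committed;   // offset a restarted consumer resumes from

    BatchCommitDemo(int logSize) {
        this.logSize = logSize;
    }

    /** Returns up to max offsets and advances the position past them. */
    List<Integer> poll(int max) {
        List<Integer> batch = new ArrayList<>();
        while (batch.size() < max && position < logSize) {
            batch.add(position++);
        }
        return batch;
    }

    /** Stores the current position, regardless of which records were processed successfully. */
    void commit() {
        committed = position;
    }

    /** Simulates a restart: the position falls back to the committed offset. */
    void restart() {
        position = committed;
    }

    int committed() {
        return committed;
    }

    public static void main(String[] args) {
        // Case 1: the first four records fail, the fifth succeeds and triggers a commit.
        BatchCommitDemo first = new BatchCommitDemo(5);
        first.poll(5);
        first.commit();
        first.restart();
        System.out.println(first.poll(5));   // prints []: the four failed records are skipped

        // Case 2: every record fails, so nothing is committed.
        BatchCommitDemo second = new BatchCommitDemo(5);
        second.poll(5);
        second.restart();
        System.out.println(second.poll(5));  // prints [0, 1, 2, 3, 4]: everything is re-read
    }
}
```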

So the consumer still needs to implement its own fault-tolerance mechanism.
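One possible form of such fault tolerance, offered here as an assumption rather than as the approach used in the test project, is to process a batch in order, stop at the first failure, and commit only the prefix that succeeded. The helper below is plain Java with hypothetical names (SafeCommit, process); it only computes the offset that would be safe to commit. With the real client, that value could be passed to commitSync(Map<TopicPartition, OffsetAndMetadata>) and the consumer moved back with seek(TopicPartition, long) so the failed record is fetched again.

```java
import java.util.Arrays;
import java.util.List;
import java.util.function.LongPredicate;

/** Computes a safe commit offset for one partition's batch; hypothetical helper, not the Kafka API. */
final class SafeCommit {

    /**
     * Runs the handler on each offset in order and stops at the first failure.
     * Returns the next offset to commit: the offset of the first failed record,
     * or last offset + 1 if every record succeeded. Returns -1 for an empty batch.
     */
    static long process(List<Long> offsets, LongPredicate handler) {
        if (offsets.isEmpty()) {
            return -1;   // nothing was consumed, so there is nothing to commit
        }
        for (long offset : offsets) {
            if (!handler.test(offset)) {
                return offset;   // resume from the failed record next time
            }
        }
        return offsets.get(offsets.size() - 1) + 1;
    }

    public static void main(String[] args) {
        List<Long> batch = Arrays.asList(10L, 11L, 12L, 13L, 14L);
        System.out.println(process(batch, offset -> offset != 12L));  // prints 12
        System.out.println(process(batch, offset -> true));           // prints 15
    }
}
```

Committing the offset of the first failed record means records after it are processed again on the next attempt, so the handler should tolerate duplicates.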

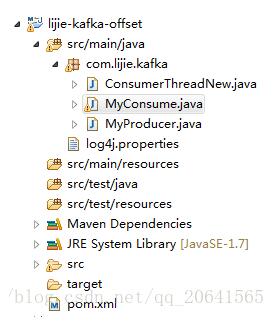

The structure of the test project is as follows:

The ConsumerThreadNew class:

    package com.lijie.kafka;

    import java.util.ArrayList;
    import java.util.Arrays;
    import java.util.List;

    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.apache.kafka.clients.consumer.ConsumerRecords;
    import org.apache.kafka.clients.consumer.KafkaConsumer;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;

    /**
     * @Filename ConsumerThreadNew.java
     * @Description
     * @Version 1.0
     * @Author Lijie
     * @Email lijiewj39069@touna.cn
     * @History
     *<li>Author: Lijie</li>
     *<li>Date: March 21, 2017</li>
     *<li>Version: 1.0</li>
     *<li>Content: create</li>
     */
    public class ConsumerThreadNew implements Runnable {

        private static Logger LOG = LoggerFactory.getLogger(ConsumerThreadNew.class);

        // The Kafka consumer
        private KafkaConsumer<String, String> consumer;

        // Name of this consumer
        private String name;

        // Topics to consume
        private List<String> topics;

        // Constructor
        public ConsumerThreadNew(KafkaConsumer<String, String> consumer, String topic, String name) {
            super();
            this.consumer = consumer;
            this.name = name;
            this.topics = Arrays.asList(topic);
        }

        @Override
        public void run() {
            consumer.subscribe(topics);
            List<ConsumerRecord<String, String>> buffer = new ArrayList<>();

            // Number of records to accumulate before committing
            final int minBatchSize = 1;

            while (true) {
                ConsumerRecords<String, String> records = consumer.poll(100);
                for (ConsumerRecord<String, String> record : records) {
                    LOG.info("Consumer name: " + name + ", consumed message: " + record.value());
                    buffer.add(record);
                }
                if (buffer.size() >= minBatchSize) {
                    // Processing succeeded at this point, so commit manually
                    consumer.commitSync();
                    LOG.info("Commit finished");
                    buffer.clear();
                }
            }
        }
    }

The MyConsume class:

    package com.lijie.kafka;

    import java.util.Properties;
    import java.util.concurrent.ExecutorService;
    import java.util.concurrent.Executors;

    import org.apache.kafka.clients.consumer.KafkaConsumer;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;

    /**
     * @Filename MyConsume.java
     * @Description
     * @Version 1.0
     * @Author Lijie
     * @Email lijiewj39069@touna.cn
     * @History
     *<li>Author: Lijie</li>
     *<li>Date: March 21, 2017</li>
     *<li>Version: 1.0</li>
     *<li>Content: create</li>
     */
    public class MyConsume {

        private static Logger LOG = LoggerFactory.getLogger(MyConsume.class);

        public static void main(String[] args) {
            Properties properties = new Properties();
            properties.put("bootstrap.servers", "10.0.4.141:19093,10.0.4.142:19093,10.0.4.143:19093");
            // Disable auto-commit; offsets are updated manually
            properties.put("enable.auto.commit", "false");
            properties.put("auto.offset.reset", "latest");
            properties.put("session.timeout.ms", "30000");
            properties.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            properties.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            properties.put("group.id", "lijieGroup");
            // Only takes effect when enable.auto.commit is true
            properties.put("auto.commit.interval.ms", "1000");
            // KafkaConsumer connects to the brokers directly, so no zookeeper.connect setting is needed

            // One thread per consumer: each task loops forever, so a smaller pool
            // would leave the extra consumers queued and never started
            final int consumerCount = 7;
            ExecutorService executor = Executors.newFixedThreadPool(consumerCount);

            // Start consuming
            for (int i = 0; i < consumerCount; i++) {
                executor.execute(new ConsumerThreadNew(new KafkaConsumer<String, String>(properties),
                        "lijietest", "consumer" + (i + 1)));
            }
        }
    }

The MyProducer class:

    package com.lijie.kafka;

    import java.util.Properties;

    import org.apache.kafka.clients.producer.KafkaProducer;
    import org.apache.kafka.clients.producer.ProducerRecord;

    /**
     * @Filename MyProducer.java
     * @Description
     * @Version 1.0
     * @Author Lijie
     * @Email lijiewj39069@touna.cn
     * @History
     *<li>Author: Lijie</li>
     *<li>Date: March 21, 2017</li>
     *<li>Version: 1.0</li>
     *<li>Content: create</li>
     */
    public class MyProducer {

        private static Properties properties;

        private static KafkaProducer<String, String> pro;

        static {
            // Configuration
            properties = new Properties();
            properties.put("bootstrap.servers", "10.0.4.141:19093,10.0.4.142:19093,10.0.4.143:19093");
            // Serializer types
            properties.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
            properties.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
            // Create the producer
            pro = new KafkaProducer<>(properties);
        }

        public static void main(String[] args) throws Exception {
            produce("lijietest");
            // Flush pending records and release the producer's resources
            pro.close();
        }

        public static void produce(String topic) throws Exception {
            // Simulated messages: one per second, with the loop counter as the value
            // String value = UUID.randomUUID().toString();
            for (int i = 0; i < 10000; i++) {
                // Wrap the message
                ProducerRecord<String, String> pr = new ProducerRecord<String, String>(topic, String.valueOf(i));
                // Send the message
                pro.send(pr);
                Thread.sleep(1000);
            }
        }
    }

The pom file:

    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
      <modelVersion>4.0.0</modelVersion>
      <groupId>lijie-kafka-offset</groupId>
      <artifactId>lijie-kafka-offset</artifactId>
      <version>0.0.1-SNAPSHOT</version>
      <dependencies>
        <dependency>
          <groupId>org.apache.kafka</groupId>
          <artifactId>kafka_2.11</artifactId>
          <version>0.10.1.1</version>
        </dependency>
        <dependency>
          <groupId>org.apache.hadoop</groupId>
          <artifactId>hadoop-common</artifactId>
          <version>2.2.0</version>
        </dependency>
        <dependency>
          <groupId>org.apache.hadoop</groupId>
          <artifactId>hadoop-hdfs</artifactId>
          <version>2.2.0</version>
        </dependency>
        <dependency>
          <groupId>org.apache.hadoop</groupId>
          <artifactId>hadoop-client</artifactId>
          <version>2.2.0</version>
        </dependency>
        <dependency>
          <groupId>org.apache.hbase</groupId>
          <artifactId>hbase-client</artifactId>
          <version>1.0.3</version>
        </dependency>
        <dependency>
          <groupId>org.apache.hbase</groupId>
          <artifactId>hbase-server</artifactId>
          <version>1.0.3</version>
        </dependency>
        <dependency>
          <groupId>jdk.tools</groupId>
          <artifactId>jdk.tools</artifactId>
          <version>1.7</version>
          <scope>system</scope>
          <systemPath>${JAVA_HOME}/lib/tools.jar</systemPath>
        </dependency>
        <dependency>
          <groupId>org.apache.httpcomponents</groupId>
          <artifactId>httpclient</artifactId>
          <version>4.3.6</version>
        </dependency>
      </dependencies>
      <build>
        <plugins>
          <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-compiler-plugin</artifactId>
            <configuration>
              <source>1.7</source>
              <target>1.7</target>
            </configuration>
          </plugin>
        </plugins>
      </build>
    </project>

Addendum: manually maintaining offsets with the Kafka Java API

Without further ado, here is the code:

    package com.kafka;

    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.apache.kafka.clients.consumer.ConsumerRecords;
    import org.apache.kafka.clients.consumer.KafkaConsumer;
    import org.apache.kafka.clients.consumer.OffsetAndMetadata;
    import org.apache.kafka.common.TopicPartition;
    import org.junit.Test;

    import java.util.*;

    public class ConsumerManageOffet {

        // Broker addresses.
        // Unlike older Kafka versions, which stored offsets in ZooKeeper, newer versions
        // store them on the brokers, so the consumer is configured with the broker addresses.
        private static String ips = "192.168.136.150:9092,192.168.136.151:9092,192.168.136.152:9092";

        public static void main(String[] args) {
            Properties props = new Properties();
            props.put("bootstrap.servers", ips);
            props.put("group.id", "test02");
            props.put("auto.offset.reset", "earliest");
            props.put("max.poll.records", "10");
            props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

            KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);
            consumer.subscribe(Arrays.asList("my-topic"));
            System.out.println("---------------------");

            while (true) {
                ConsumerRecords<String, String> records = consumer.poll(10);
                System.out.println("+++++++++++++++++++++++");
                for (ConsumerRecord<String, String> record : records) {
                    System.out.println("---");
                    System.out.printf("offset=%d,key=%s,value=%s%n", record.offset(), record.key(), record.value());
                }
            }
        }

        // Maintain offsets manually
        @Test
        public void autoManageOffset2() {
            Properties props = new Properties();
            // Broker addresses
            props.put("bootstrap.servers", ips);
            // Consumer group
            props.put("group.id", "groupPP");
            // Where to start: resume from the committed offset if the group has one, otherwise start from the beginning
            props.put("auto.offset.reset", "earliest");
            // Disable automatic offset commits
            props.put("enable.auto.commit", "false");
            // Key and value deserializers
            props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

            // Create the consumer
            KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);
            // Subscribe to the topic
            consumer.subscribe(Arrays.asList("my-topic"));

            while (true) {
                ConsumerRecords<String, String> records = consumer.poll(1000);
                // Find out which partitions the records in this batch belong to
                Set<TopicPartition> partitions = records.partitions();
                for (TopicPartition tp : partitions) {
                    // Take the records of this partition and consume them
                    List<ConsumerRecord<String, String>> partitionRecords = records.records(tp);
                    for (ConsumerRecord<String, String> r : partitionRecords) {
                        System.out.println(r.offset() + " " + r.key() + " " + r.value());
                    }
                    // The offset of the last record in this partition plus 1 is the offset to commit next
                    long newOffset = partitionRecords.get(partitionRecords.size() - 1).offset() + 1;
                    consumer.commitSync(Collections.singletonMap(tp, new OffsetAndMetadata(newOffset)));
                }
            }
        }
    }
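The per-partition rule used above (commit the last consumed offset plus 1) can be isolated in plain Java. This is a minimal sketch without the Kafka client: PartitionOffsets, Rec and offsetsToCommit are hypothetical names, and a partition is represented by a bare int instead of a TopicPartition.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Computes per-partition commit offsets for a batch; hypothetical helper, not the Kafka API. */
final class PartitionOffsets {

    /** Minimal stand-in for a consumed record: only its partition and offset. */
    static final class Rec {
        final int partition;
        final long offset;

        Rec(int partition, long offset) {
            this.partition = partition;
            this.offset = offset;
        }
    }

    /** For each partition in the batch, the offset to commit is the highest consumed offset + 1. */
    static Map<Integer, Long> offsetsToCommit(List<Rec> batch) {
        Map<Integer, Long> next = new TreeMap<>();
        for (Rec r : batch) {
            // Keep the larger value if the partition was already seen
            next.merge(r.partition, r.offset + 1, Long::max);
        }
        return next;
    }

    public static void main(String[] args) {
        List<Rec> batch = Arrays.asList(
                new Rec(0, 5), new Rec(0, 6), new Rec(0, 7),
                new Rec(1, 40), new Rec(1, 41));
        System.out.println(offsetsToCommit(batch));  // prints {0=8, 1=42}
    }
}
```

The committed value is the position of the next record to read, which is why 1 is added to the last consumed offset.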

